I am proud as hell to share the final “Champagne Moment” of FlowOS—my agentic framework built on Design Thinking, OKRs, and modified Kanban. I’ve moved past the “AI Chat” phase and into a fully realized, edge-deployed Operating System.

The agentic swarm is based on a self-designed framework, about which I published a book, in cooperation with Claude Opus 4.7 https://futureorg.digital/wp-content/uploads/2026/04/Book_FlowOS_Framework.pdf

The agentic swarm was built in cooperation with an awesome tool named Tasklet.ai.

The Architecture: High-Performance Swarm Governance

-

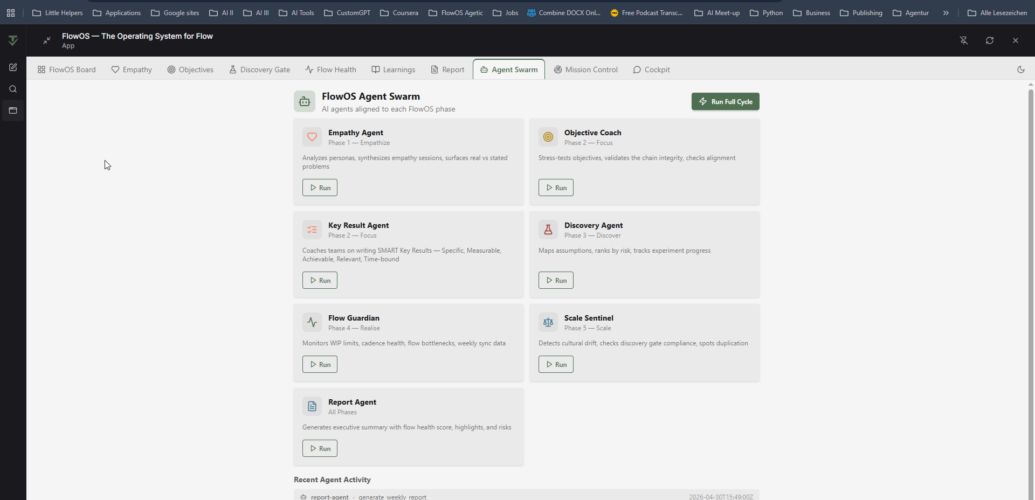

The Foundation: A “Naked Swarm” of specialized agents—including the Flow Guardian, Empathy Agent, and Objective Coach—all working within a unified framework.

- The State Store: I’m using Google Drive as the central nervous system. Every agent, from the Scale Sentinel to the Report Agent, syncs its context and state to structured folders, creating a persistent, human-readable organizational memory.

- The Headless Breakthrough: I just added an extra feature: a connector to Claude via Cloudflare Workers and Pages. Users can now prompt the swarm directly through the Claude interface.
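To make the State Store idea more concrete, here is a minimal sketch of how an agent snapshot could be turned into a folder path and a human-readable file body. The folder layout (`FlowOS/agents/<agent>/<date>.json`), the `AgentSnapshot` shape, and all function names are assumptions for illustration; the actual folder structure and the Google Drive upload step are not shown here.

```typescript
// Hypothetical snapshot shape; the real FlowOS state format is not documented in this post.
interface AgentSnapshot {
  agent: string; // e.g. "Scale Sentinel"
  updatedAt: string; // ISO 8601 timestamp
  state: Record<string, unknown>;
}

// Turn an agent name into a folder-safe slug, e.g. "Scale Sentinel" -> "scale-sentinel".
function slug(name: string): string {
  return name
    .trim()
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-")
    .replace(/^-+|-+$/g, "");
}

// Assumed layout: one folder per agent, one file per calendar day.
function snapshotPath(snapshot: AgentSnapshot): string {
  const day = snapshot.updatedAt.slice(0, 10); // "YYYY-MM-DD" part of the timestamp
  return `FlowOS/agents/${slug(snapshot.agent)}/${day}.json`;
}

// Pretty-printed JSON keeps the stored state readable for humans browsing the folders.
function serializeSnapshot(snapshot: AgentSnapshot): string {
  return JSON.stringify(snapshot, null, 2);
}
```

The point of the sketch is only the split between a predictable path and a readable body; any storage backend that accepts a path and a string could sit behind it.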

The “50ms” Secret Sauce

By using Cloudflare Workers as a lightweight proxy, I’ve eliminated the dreaded “loading spinner”:

- Instant Validation: It takes ~50ms to accept a question. The user gets an immediate response while the swarm processes asynchronously in the background.

- Global Edge Deployment: No cold starts. The system feels local, even though a complex multi-agent dialogue is happening behind the scenes.
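The accept-first, process-later behaviour described above can be sketched roughly as follows. This is not the deployed Worker code: `acceptQuestion`, `SwarmForwarder`, and the job-id scheme are hypothetical names, and `BackgroundContext` is a minimal stand-in for the `ExecutionContext` that Cloudflare Workers pass to a fetch handler (only its `waitUntil` method is used).

```typescript
// Hypothetical response shapes; none of these names come from FlowOS or Tasklet.
interface Ack {
  status: 202;
  jobId: string;
  message: string;
}

interface Rejection {
  status: 400;
  message: string;
}

// Minimal stand-in for the Workers ExecutionContext (only waitUntil is needed here).
interface BackgroundContext {
  waitUntil(task: Promise<unknown>): void;
}

// Assumed callback that hands the question to the swarm, e.g. via a webhook call.
type SwarmForwarder = (jobId: string, question: string) => Promise<void>;

// Placeholder id generator; a real Worker would more likely use crypto.randomUUID().
let counter = 0;
function nextJobId(): string {
  counter += 1;
  return `job-${counter}`;
}

// Validate the question, push the slow work into the background, answer at once.
function acceptQuestion(
  question: string,
  ctx: BackgroundContext,
  forwardToSwarm: SwarmForwarder,
): Ack | Rejection {
  const trimmed = question.trim();
  if (trimmed.length === 0) {
    return { status: 400, message: "Question must not be empty." };
  }
  const jobId = nextJobId();
  // Not awaited: the caller receives the acknowledgment without waiting for the swarm.
  ctx.waitUntil(forwardToSwarm(jobId, trimmed));
  return { status: 202, jobId, message: "Accepted; the swarm is processing." };
}
```

Inside a Worker's fetch handler, the returned object would be serialized into the HTTP response, and the job id gives the caller something to ask about later once the swarm has finished.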

The “Lazy” High-Fidelity Demo

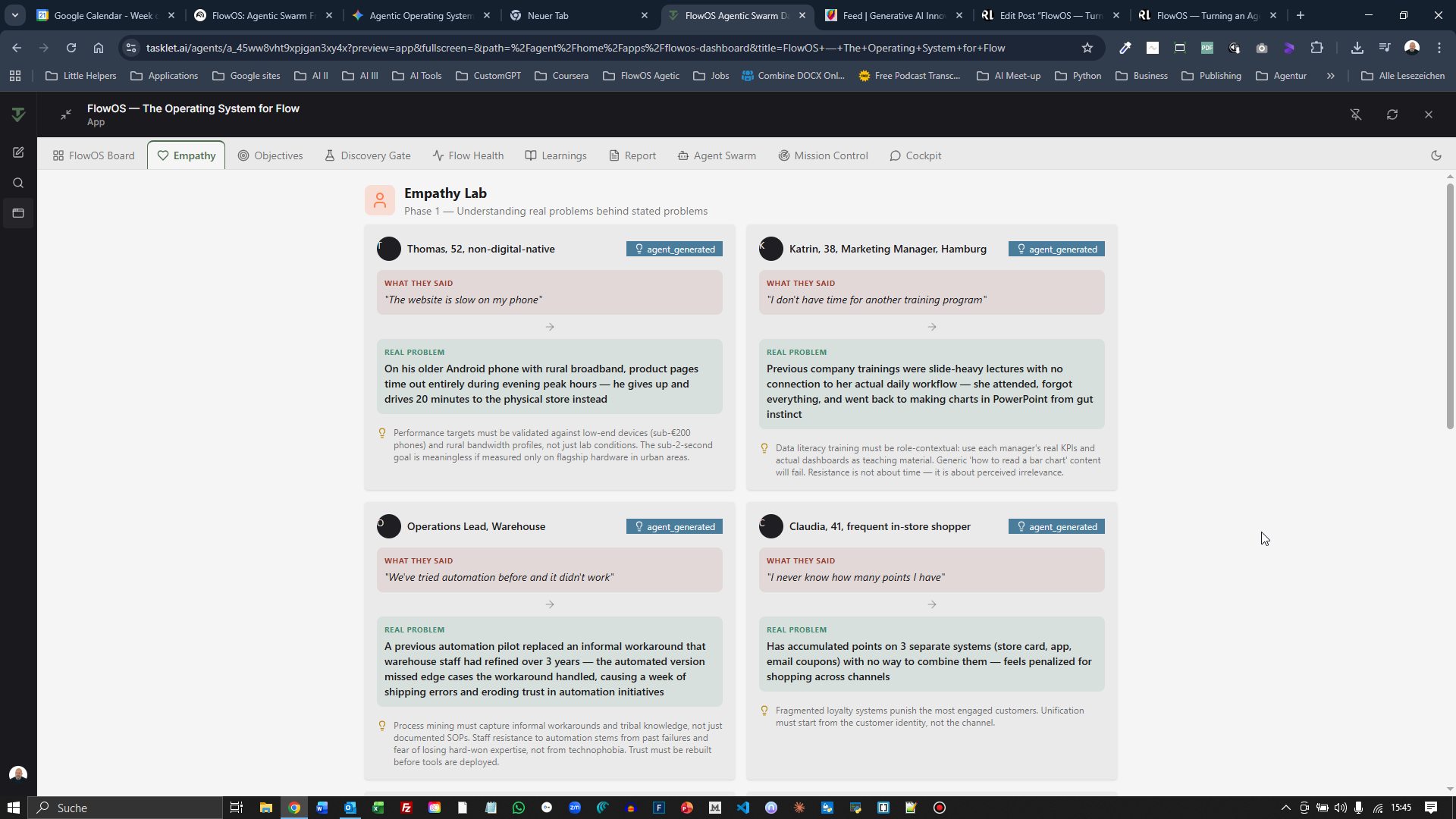

To demonstrate the system without the manual video editing grind, I fed my FlowOS book and screenshots into NotebookLM. It generated a wonderful, engaging deep-dive video that perfectly captures the “human” side of the Empathy Lab and the Objective Chain.

The Result

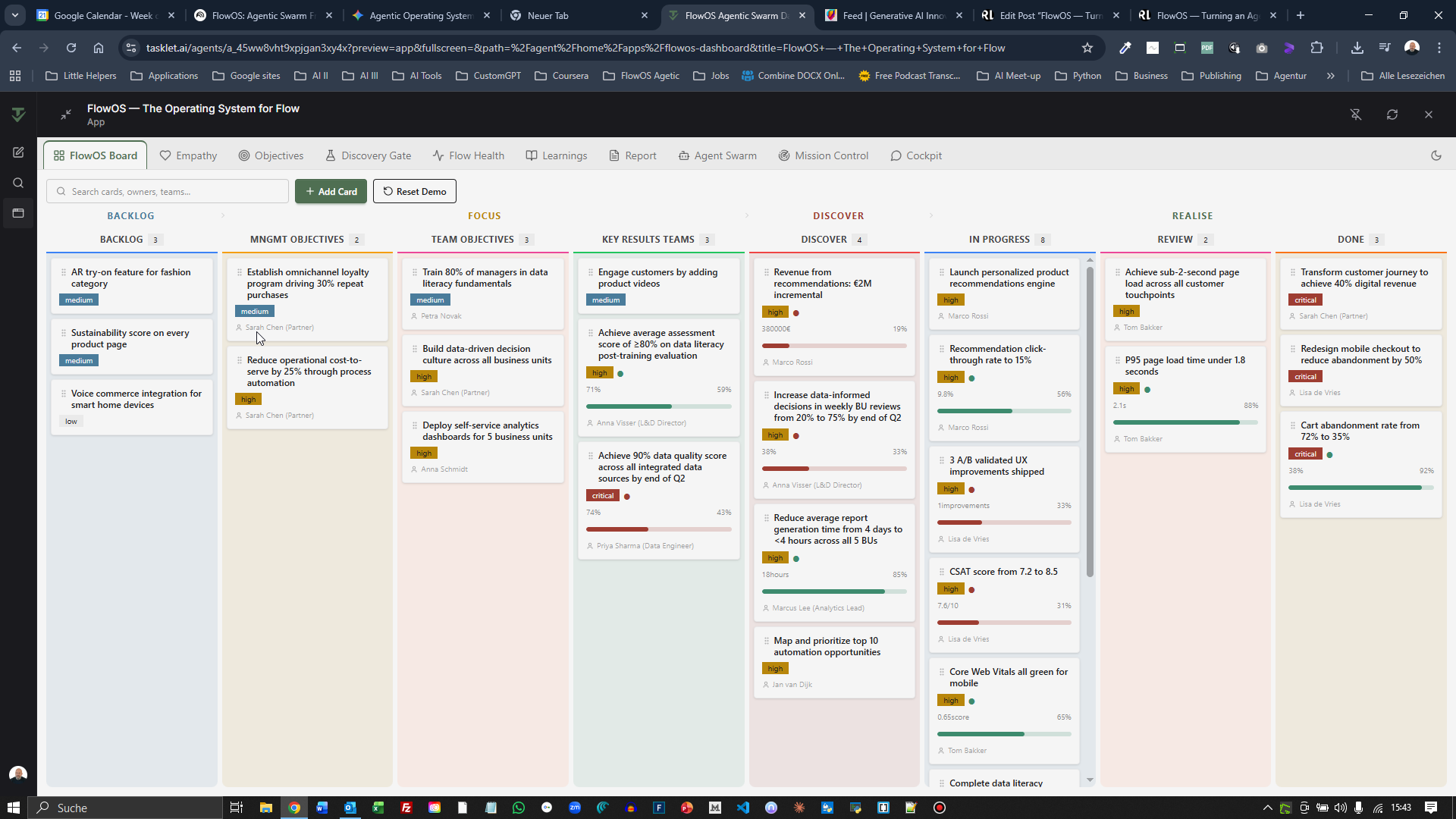

Whether you are in the Cockpit (my custom stakeholder chat) or prompting via Claude, the board updates on the fly. It’s a “Glass Box” OS where every internal agent request is decoupled from the external stakeholder view for maximum professional clarity.

I’d love to hear your thoughts on using Cloudflare Workers to bridge the gap between “prompting” and “production-grade execution”!

If you want to learn about the philosophy behind the framework and the swarm, watch the video generated by NotebookLM from screenshots, my FlowOS book, and my book about Agentic Transformation. Enjoy 🙂

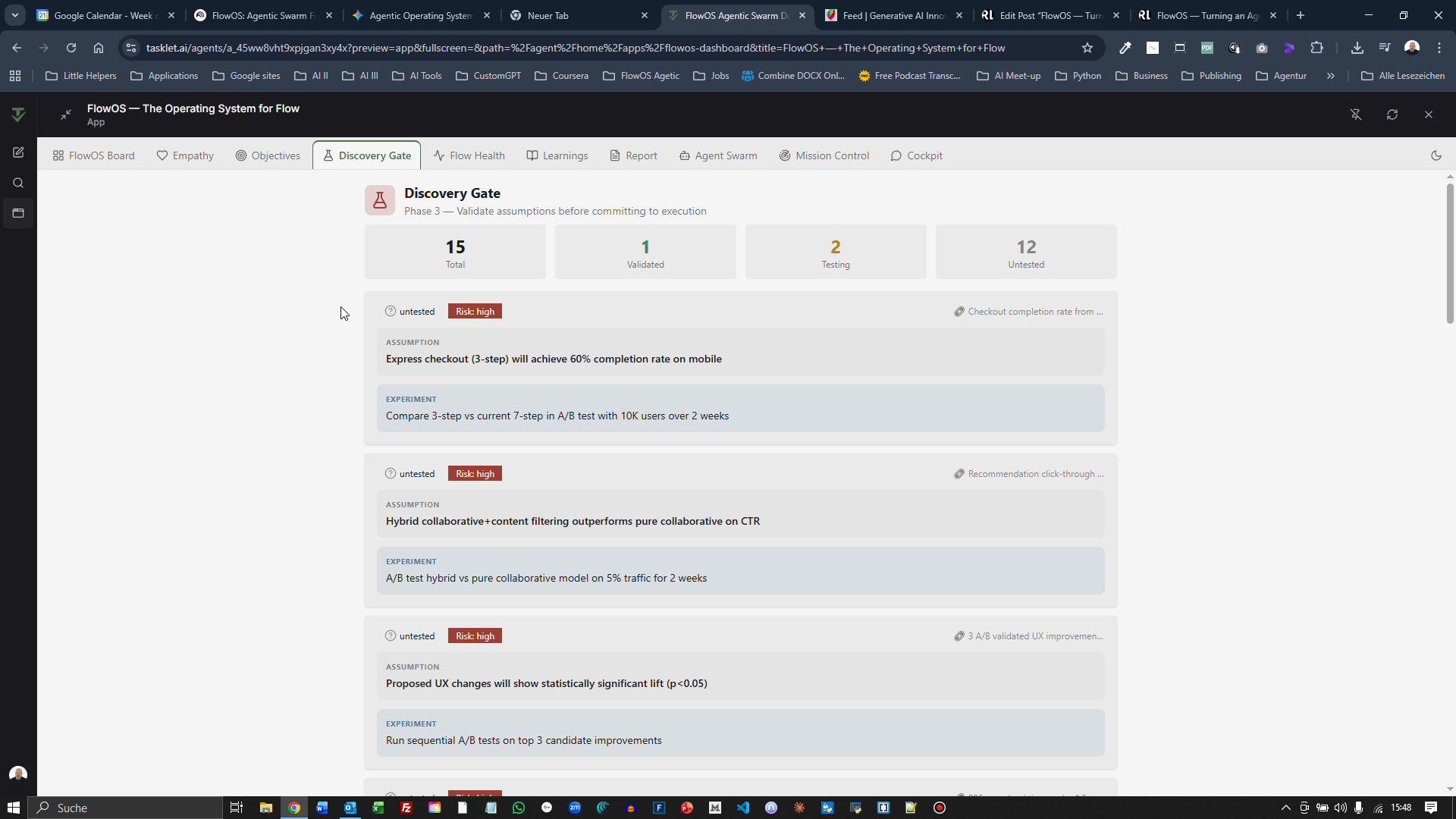

And here I have added some screenshots.

Board

Empathy Lab

Discovery Gate

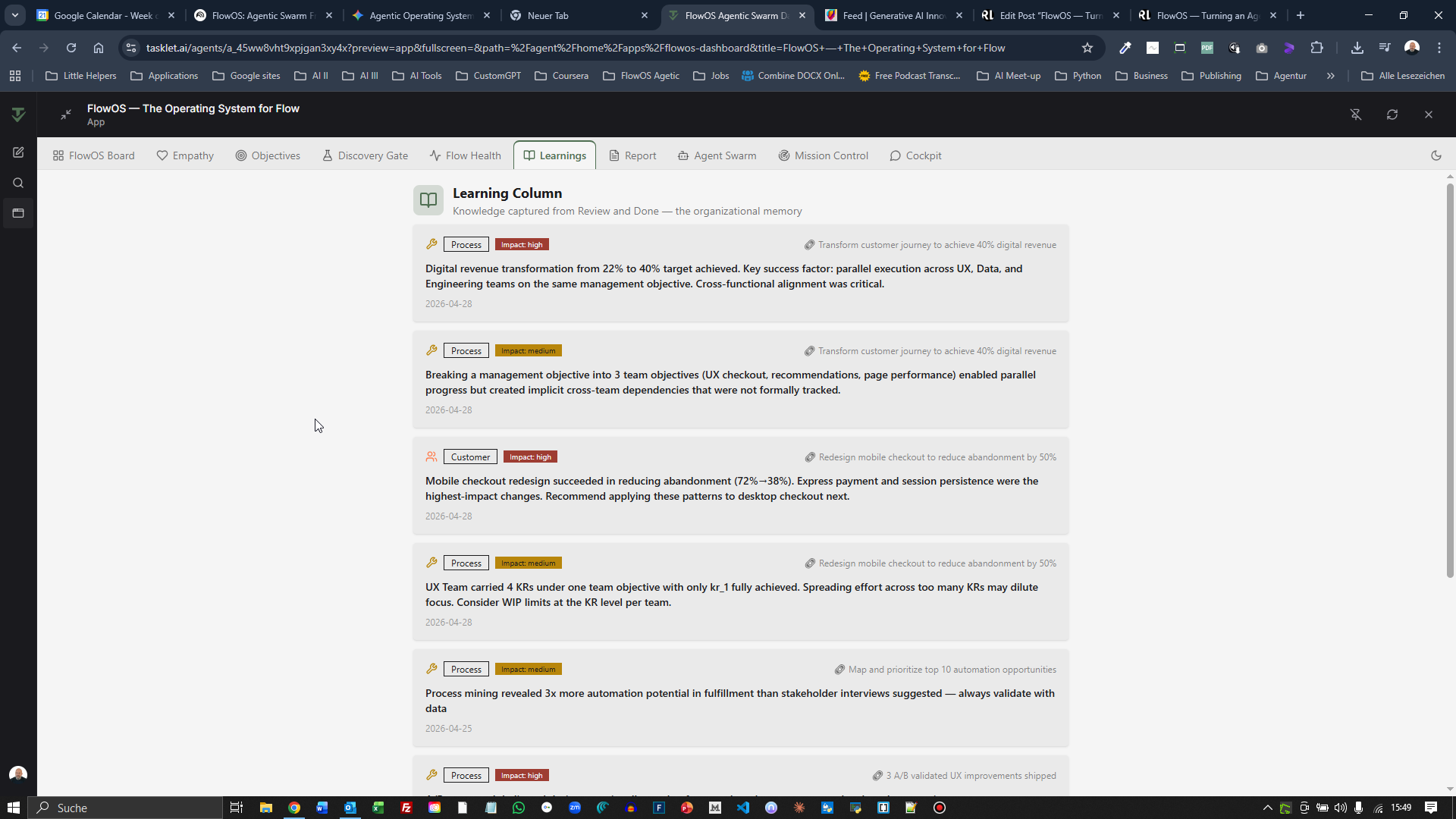

Learning Column

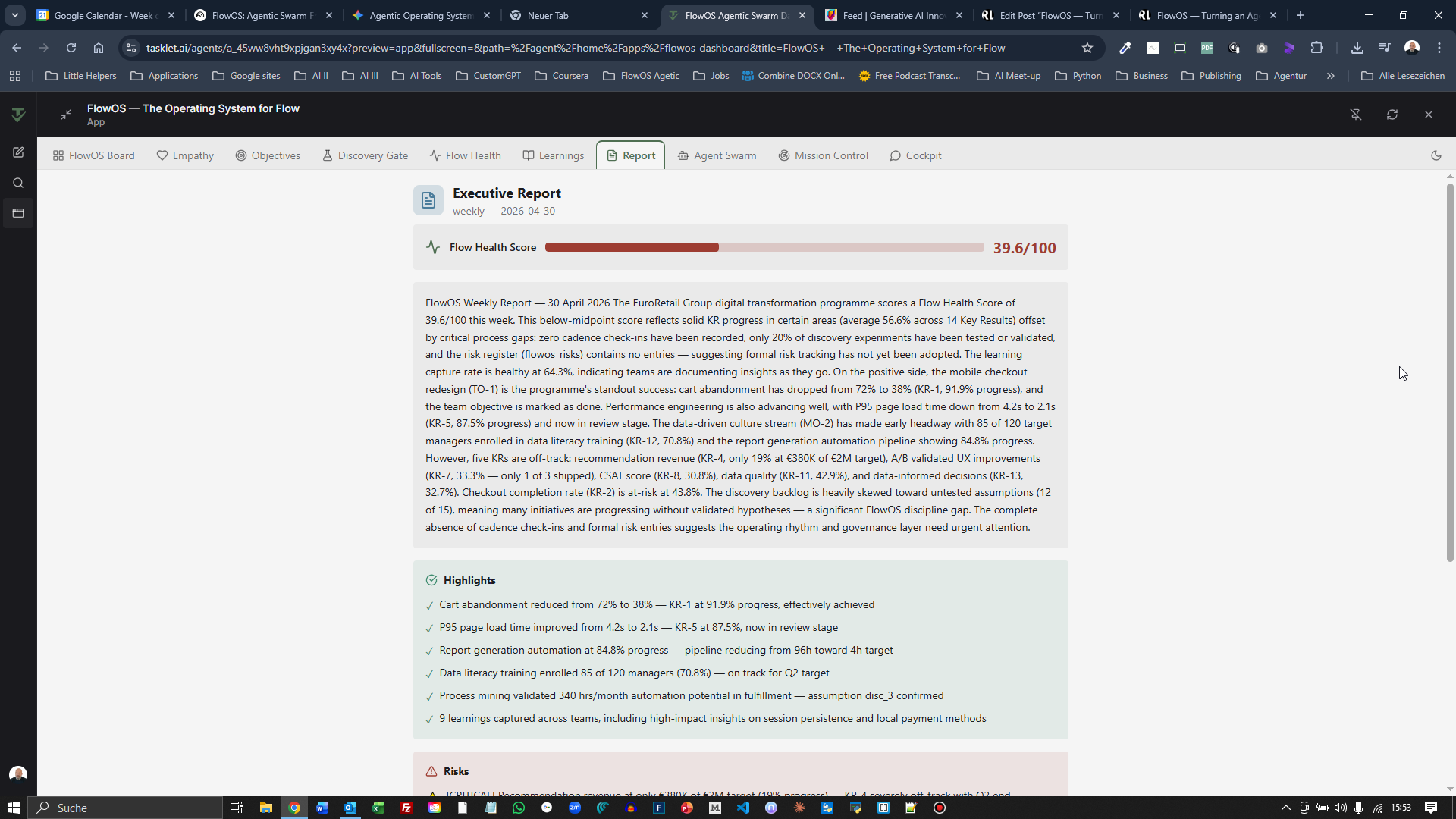

Executive Report

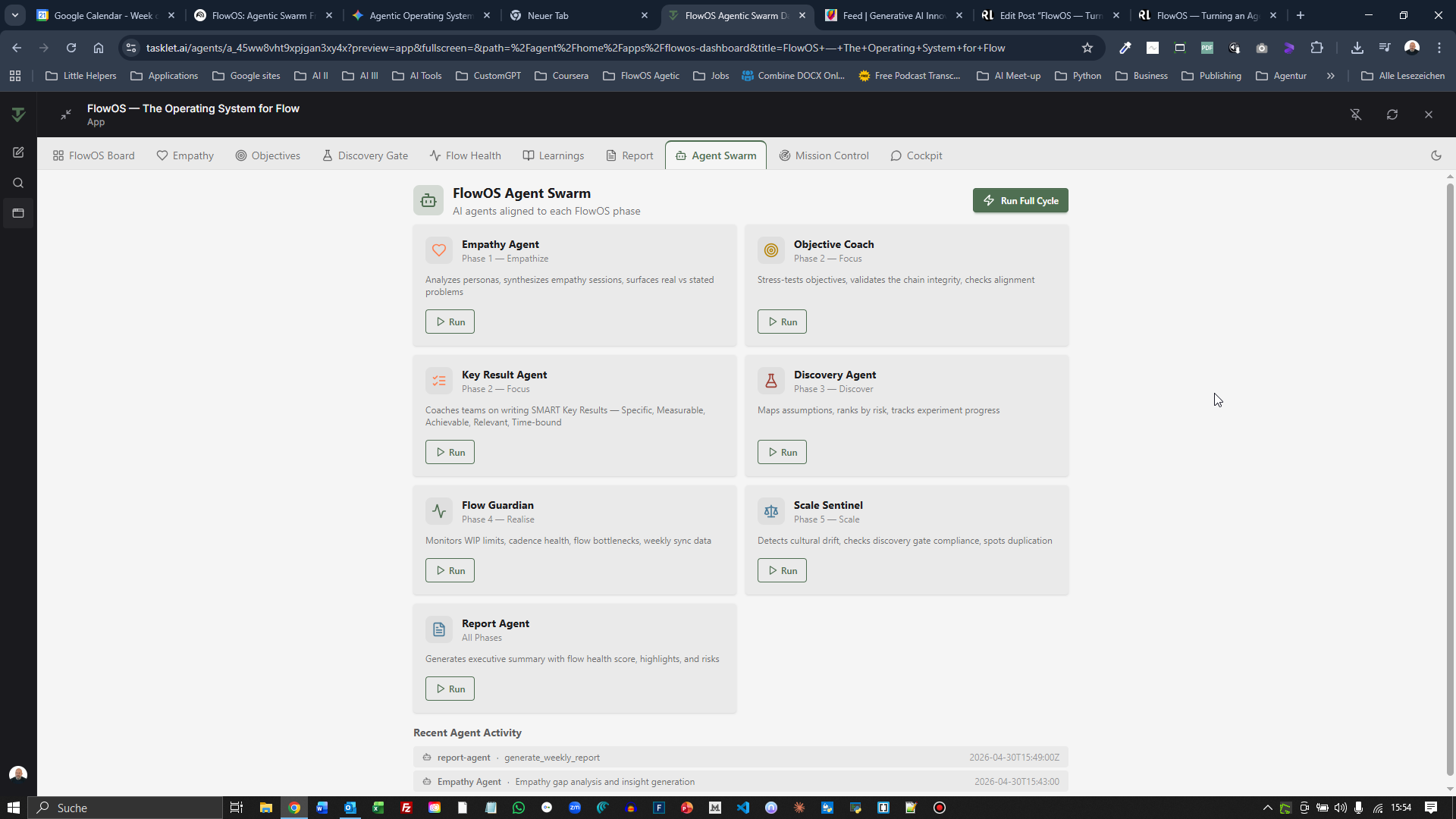

Agentic Swarm

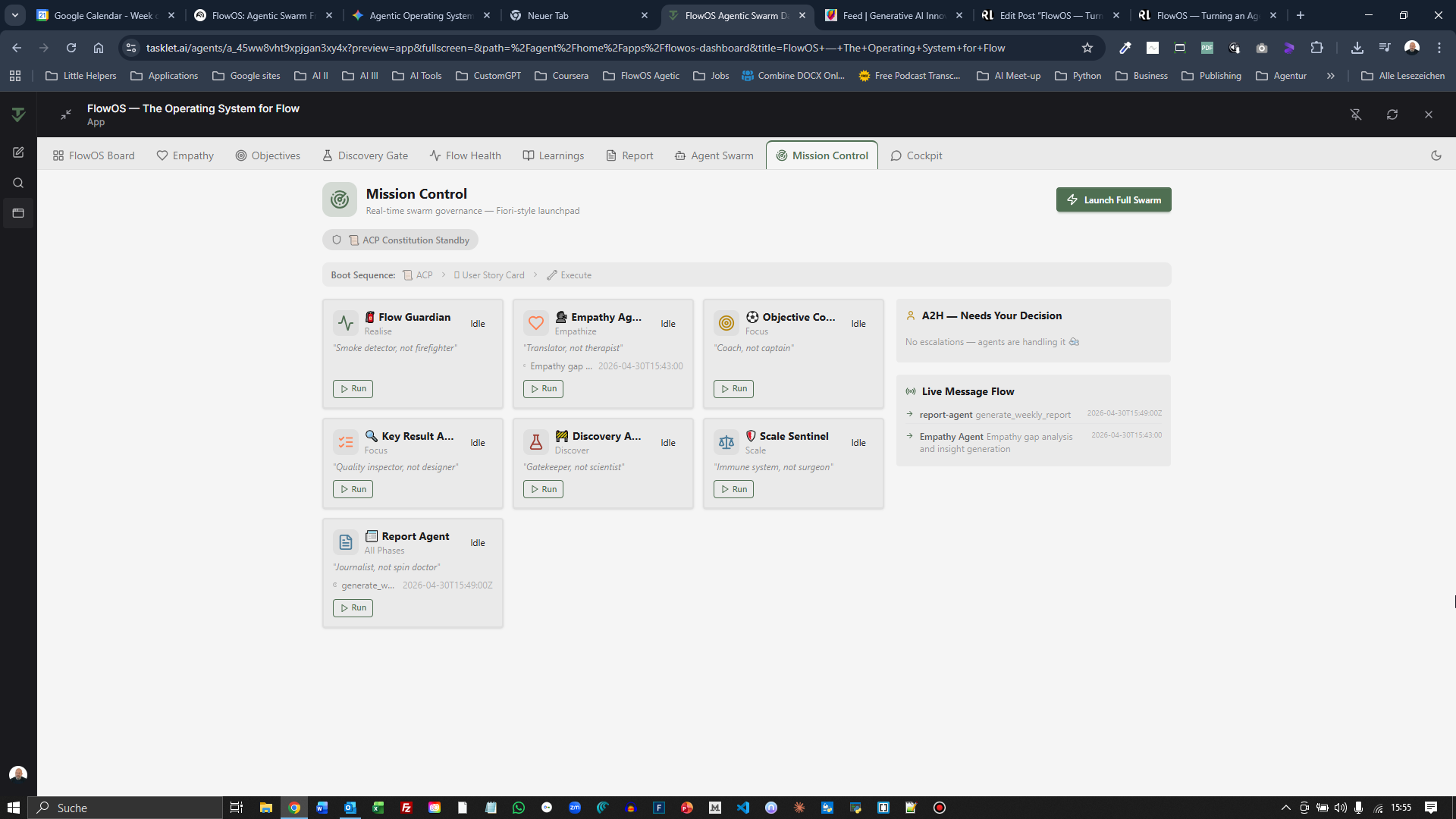

Mission Control

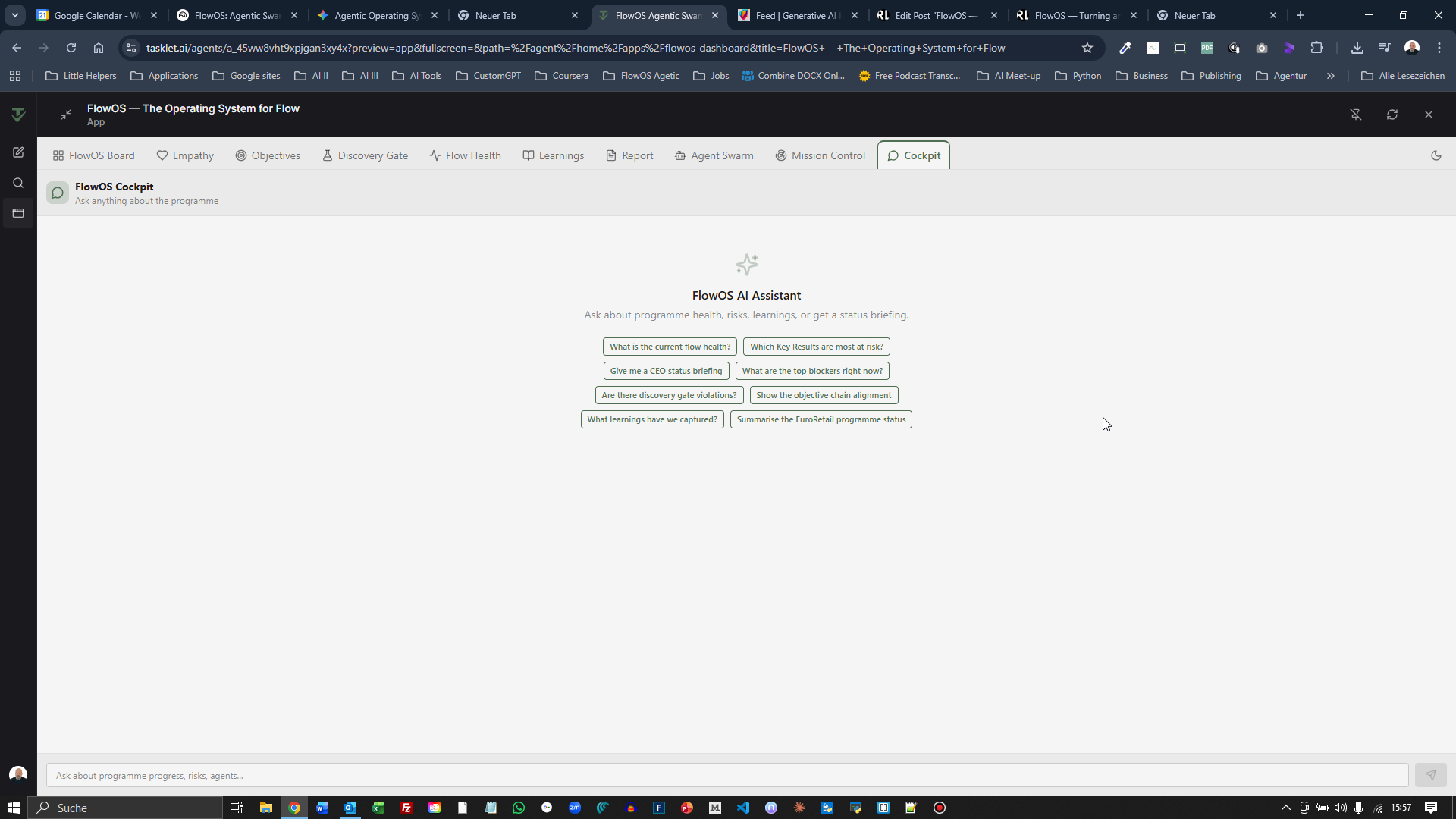

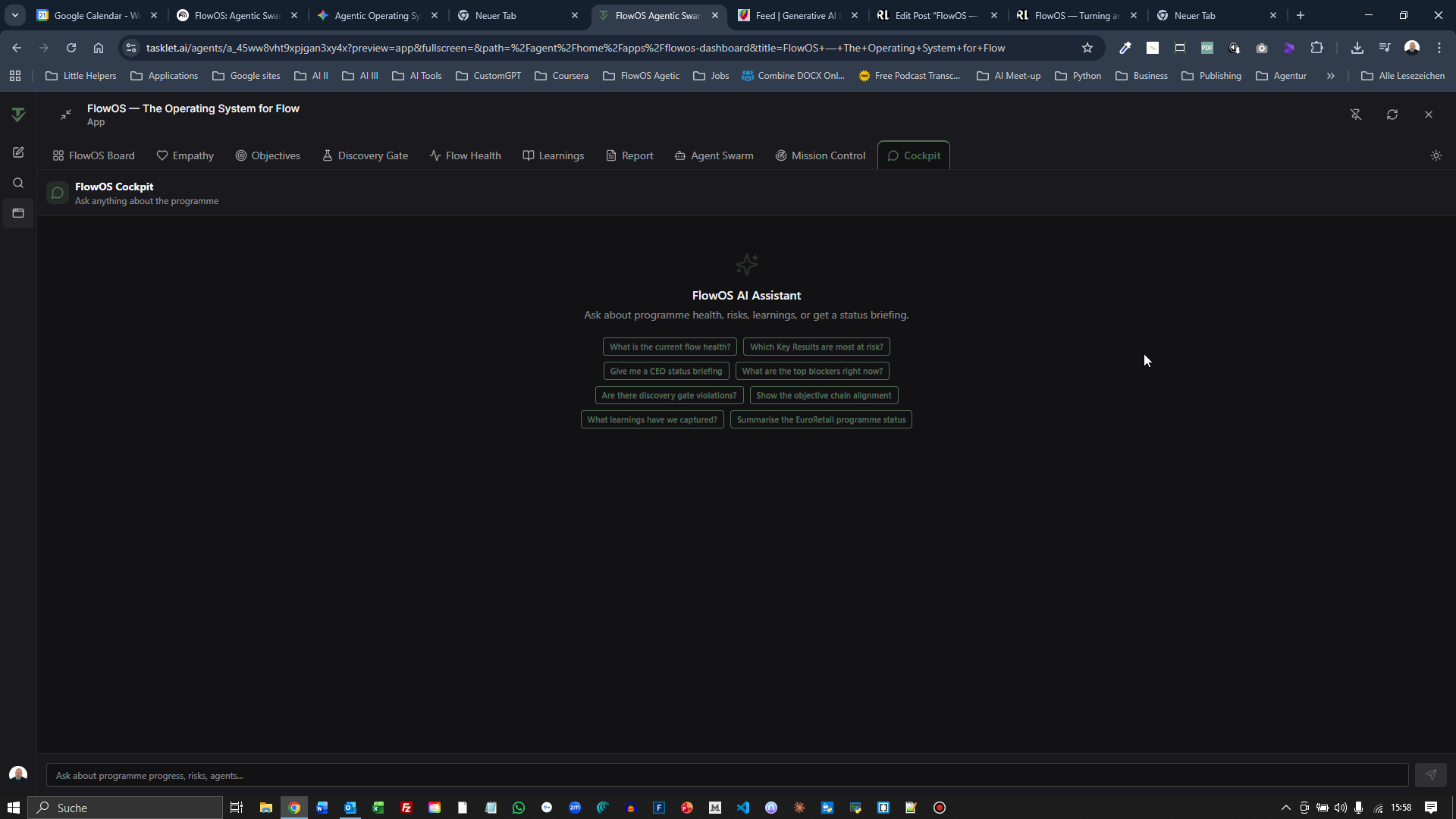

Cockpit/Chat Assistant

Dark View

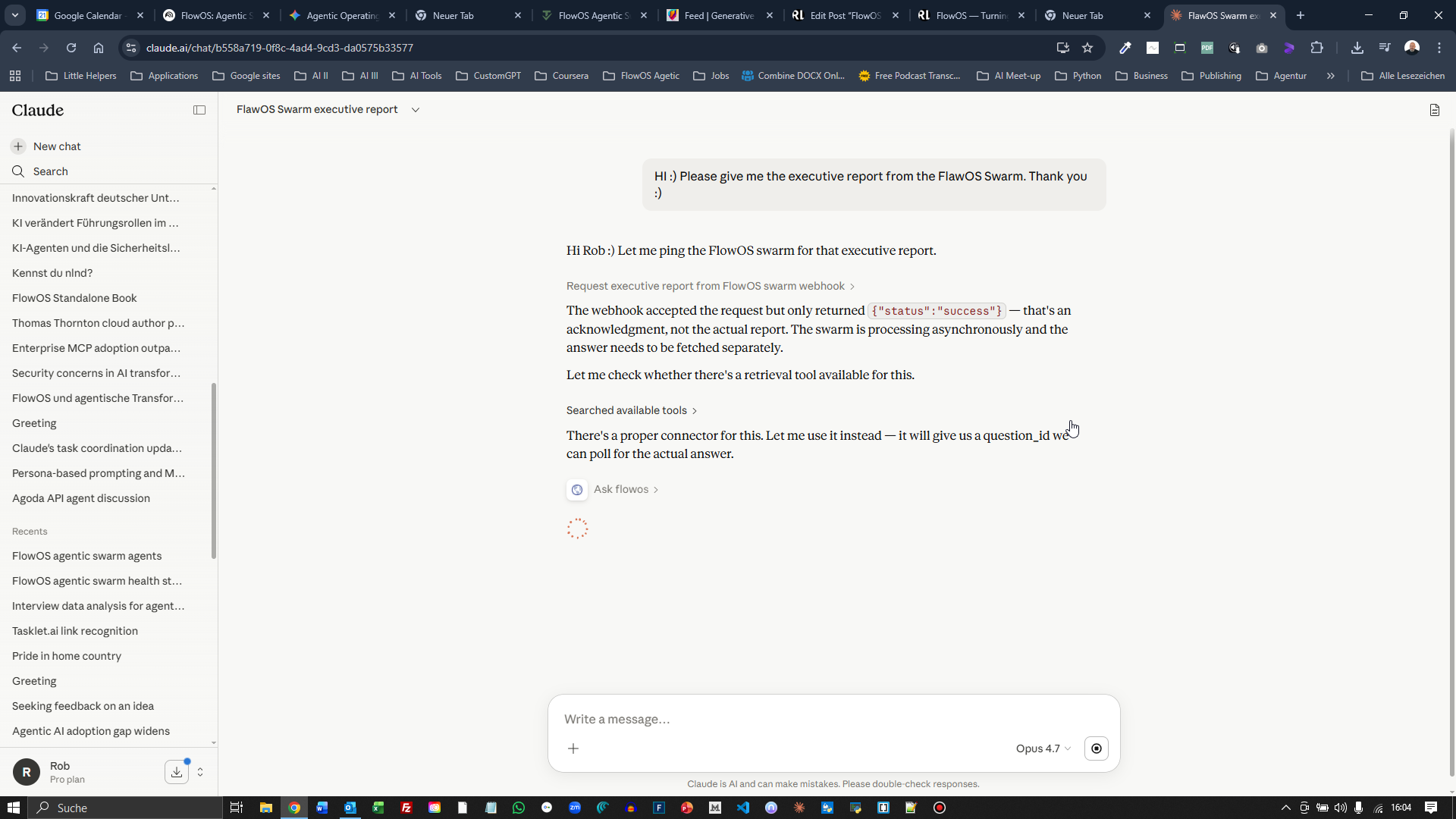

Claude AI Assistant (connected through Cloudflare)

Chat Copied:

Claude responded: I’ll check with the FlowOS swarm on discovery gate violations for you.

Query FlowOS swarm about discovery gate violations

The webhook accepted the request but only returned an acknowledgment, not the actual answer. This looks like an async pattern — the swarm needs to process and the answer comes back separately. Let me try the FlowOS connector tools, which likely have a proper “ask then fetch result” flow.

Loaded tools, used Tasklet-Claude-Connector integration

Let me give the swarm a moment and then fetch the answer.

Ran 2 commands, used Tasklet-Claude-Connector integration

Here’s what came back from the Discovery Gate Agent — and Rob, this is bad. Not “needs attention” bad. Systemic bad.

The headline

Discovery Gate compliance is 0%. Every single card that moved past Discover did so without validated assumptions. That’s not a process gap, that’s a process that doesn’t exist in practice.

What’s actually broken

9 cards in violation:

Seven critical violations sitting in in_progress — kr_2 (checkout), kr_3 (recommendations), kr_6 (Core Web Vitals), kr_7 (A/B UX), kr_8 (CSAT), kr_9 (analytics dashboards), kr_12 (data literacy training). All running on untested assumptions.

Two high-severity ones: kr_5 sitting in review without its Lighthouse benchmark validated, and kr_1 — cart abandonment — already marked done with zero discovery records. That last one is the worst signal in the report. A KR closed out without evidence means either the team is fabricating outcomes or no one is checking.

Stale experiment: disc_1 (above-fold vs below-fold A/B for kr_4) has been “in testing” since April 15 — 16+ days, no results. That’s Marco Rossi’s team.

Assumption pipeline: 80% untested, 13% testing, 7% validated, 0% invalidated. The zero invalidations is its own red flag — when nothing ever fails a test, it usually means nothing is being seriously tested.

My read — and pushback for you

The swarm gave you tactical recommendations (halt, start experiments, escalate to Tom Bakker). Fine. But if I’m being a peer here: the recommendations treat this as a backlog problem. It isn’t. 0% compliance with a gate that supposedly exists is a governance failure, not an execution one. People are walking past the gate because nothing happens when they do.

Two things worth challenging before you escalate:

- Is the Discovery Gate actually instrumented, or is it aspirational? If kr_1 reached done with no discovery records and no one flagged it on the way, the gate is decorative. Telling Tom to enforce it means nothing without a mechanism that blocks movement, not just measures it after the fact.

- Retroactive documentation for kr_1 is a trap. The swarm suggests it. I’d push back. Manufacturing evidence after the outcome teaches the team that the gate is a paperwork exercise. Better to mark it explicitly as “shipped without discovery: audit only” and let it stand as the cautionary example.

Want me to ask the swarm to draft the actual escalation message to Tom Bakker, or pull the Flow Coach’s view on why the gate is being bypassed culturally?
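The “ask then fetch result” flow that Claude describes in the transcript can be sketched as a simple polling loop. The `JobResult` shape, the `FetchResult` callback, and the default interval and attempt count are assumptions for illustration; the real Tasklet-Claude-Connector payloads are not shown in this post.

```typescript
// Hypothetical result shape returned by the connector for a given job.
type JobResult =
  | { state: "pending" }
  | { state: "done"; answer: string };

// Assumed callback that looks up the current result for a job id.
type FetchResult = (jobId: string) => Promise<JobResult>;

const sleep = (ms: number) =>
  new Promise<void>((resolve) => setTimeout(resolve, ms));

// Poll until the swarm has an answer or the attempt budget is used up.
async function waitForAnswer(
  jobId: string,
  fetchResult: FetchResult,
  intervalMs = 5000,
  maxAttempts = 10,
): Promise<string> {
  for (let attempt = 1; attempt <= maxAttempts; attempt++) {
    const result = await fetchResult(jobId);
    if (result.state === "done") {
      return result.answer;
    }
    // Wait before the next poll, but not after the final attempt.
    if (attempt < maxAttempts) {
      await sleep(intervalMs);
    }
  }
  throw new Error(`No answer for ${jobId} after ${maxAttempts} attempts`);
}
```

With these assumed defaults, the caller waits up to roughly 45 seconds between the first and last poll before giving up.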

I was a bit disappointed by the result. Not by the content, but by the speed, so I asked Tasklet about the reasons. Here’s what it told me:

Behind the Curtain: What 30 Seconds Really Means

When you ask the swarm a question, the answer takes ~25-30 seconds. That’s not lag — that’s governance in action.

Here’s what happens behind the curtain every time an agent wakes up:

Compare: Vanilla Claude answers in 2 seconds — and once hallucinated 8 fake agent names when asked about FlowOS. Fast, but wrong.

30 seconds of grounded truth > 2 seconds of confident hallucination.

While testing the app, I discovered some issues and reported them to Tasklet:

The bugs-and-fixes story is actually stronger than a polished demo:

✅ Discovery Gate: hard block (no bypass, no override, Gemini-approved)

✅ PO-style drag-to-reorder (up AND down, persisted to DB)

✅ Gate works on both paths (drag-and-drop + CardModal)

✅ Claude ↔ Tasklet MCP Bridge (v1.2, full round-trip, live on Cloudflare)

That’s the full FlowOS trifecta now:

- 🧠 Governance — Discovery Gate with teeth

- ✋ Human in the Loop — PO manual priority

- 🌐 Headless OS at the Edge — Claude as front-end, Tasklet as engine room, Cloudflare as the bridge
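As an illustration of what a Discovery Gate “with teeth” could look like, here is a minimal sketch of a hard block on card movement. The `Card` shape, the column order, and the rule itself (at least one assumption, all of them validated) are assumptions made for this example, not the actual FlowOS implementation.

```typescript
type AssumptionStatus = "untested" | "testing" | "validated" | "invalidated";
type Column = "discover" | "in_progress" | "review" | "done";

// Hypothetical card shape; real FlowOS cards will carry more fields.
interface Card {
  id: string;
  column: Column;
  assumptions: AssumptionStatus[];
}

const COLUMN_ORDER: Column[] = ["discover", "in_progress", "review", "done"];

// Assumed rule: leaving Discover requires at least one assumption, all validated.
function passesDiscoveryGate(card: Card): boolean {
  return (
    card.assumptions.length > 0 &&
    card.assumptions.every((status) => status === "validated")
  );
}

// Single entry point for moves, so drag-and-drop and the card modal share one check.
function moveCard(card: Card, target: Column): Card {
  const leavingDiscover =
    card.column === "discover" &&
    COLUMN_ORDER.indexOf(target) > COLUMN_ORDER.indexOf("discover");
  if (leavingDiscover && !passesDiscoveryGate(card)) {
    // Hard block: there is no override flag, the move simply fails.
    throw new Error(`Discovery Gate: ${card.id} has unvalidated assumptions`);
  }
  return { ...card, column: target };
}
```

Routing every move through one function is the design choice that matters here: if both UI paths call `moveCard`, the gate cannot be bypassed by switching from drag-and-drop to the modal.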